Annnnd, We’re Back!

Welcome back to Theoretically News!

Sorry, it's been a minute! I did miss a few weeks of the newsletter, mainly due to poor planning on my part. The good news is: we're back on track, largely thanks to a Claude project (no, Claude is not writing this newsletter, but it is proofreading it!) that I'll discuss down below!

OK, let's get back on track!

NEWS

Adobe's CEO is stepping down. What does that say?

Big news out of Adobe this week. After 18 years at the helm, CEO Shantanu Narayen is stepping down.

Now, the timing here is interesting, because the announcement dropped alongside Adobe's Q1 earnings, which actually beat estimates across the board: revenue came in at $6.39 billion, with subscription revenue up 12%.

But the stock still dropped ~8% in after-hours trading.

Because of course it did.

To give credit where it's due, Narayen's run was impressive. Under his watch, Adobe went from under $1 billion in revenue to over $25 billion, and headcount grew from 3,000 to 30,000+.

Granted, it was also during his run that Creative Cloud and the subscription model became a thing, and look, I know that's still a sore spot for many users.

And just to paint a picture: the stock is down ~23% in 2026 alone and sits more than 60% below its 2021 high, despite record revenue. The market is clearly spooked about whether AI will eat Adobe's business model, and you can understand why. Competitors from Freepik to Canva are all chipping away at workflows that used to begin and end inside Adobe.

That said, it's not like they've been asleep. Firefly has generated over 12 billion images since launch. Their AI-related revenue more than tripled year-over-year, exceeding $250 million. They've been embedding AI features across Photoshop, Illustrator, and Premiere Pro: the whole suite. And they have 850 million monthly users across their products, up 17%.

My Take: There's no question that Adobe was late to the AI pivot. And I think their biggest ongoing struggle has been messaging: trying to court two very different user bases at the same time.

On one side, you've got the traditionalists, who are wary of Adobe training on their data and images, despite Adobe consistently stating that it isn't. On the other side, you've got creators who are fully embracing AI tools, wandering out of the Adobe ecosystem to adopt third-party generators and building the kinds of trending workflows that platforms like Freepik have been running with.

The result? Mixed messaging that doesn't fully win anywhere.

And I'm not knocking them. I've glimpsed the inside of Adobe, and it's a big ship. So maybe a new captain is exactly what they need to set a new course.

Now, many will proclaim this the death of Adobe. But don't forget: this is a multibillion-dollar company. They aren't going anywhere anytime soon.

The big question is: Who will be at the helm, and what course will they plot?

NEWS

Meta's "Avocado" Goes Brown.

Meta's next-gen AI model, code-named "Avocado," has been delayed, pushed from a mid-March launch to May at the earliest, possibly June.

As reported by The New York Times, internal testing showed that while Avocado beat Meta's previous models and Google's Gemini 2.5, it could not match Gemini 3.0 or the latest models from OpenAI and Anthropic. The weak spots? Logical reasoning, programming, and writing.

The solution? Take that avocado and turn it into guacamole.

According to reports, Meta's leadership actually discussed temporarily licensing Gemini from Google to power Meta's AI products.

Let that sit for a second. They're spending between $115 billion and $135 billion on AI infrastructure in 2026, and they're looking to rent.

Why It Matters: Avocado isn't just a chatbot model. It's meant to be Meta's foundational model, the base for everything, including their image and video generation ambitions. That said, they're also building a separate model, code-named "Mango," for image and video generation.

And while mangoes and avocados sound awesome for a vacation, I think this also shows the old-school Google problem: a lack of focus. Right hand, left hand, and all that.

My Take: In a space where Anthropic and OpenAI are shipping model updates at a relentless pace, and ByteDance is gearing up for its own next-gen launches, Meta is clearly losing the hype-cycle game. The big question is: will rented land buy them enough time to catch up?

New Model

PixVerse Gets a Bag! $300M for AI Video

Back on our side of the fence:

PixVerse just closed a $300 million Series C, valuing the company at over $1 billion — making this the largest funding round in Asia's AI video generation category.

The round was led by CDH Investments, with previous backing from Alibaba (which led PixVerse's $60M Series B last September). The company claims over 100 million users across 175 countries, 16 million monthly active users (MAU), and over 2.1 billion videos generated on the platform.

Now, PixVerse has been kicking around for a while. I'll generously say it's never been a flagship platform, but it does surface on occasion with some pretty interesting stuff.

I recently covered their R1 real-time video generator, and yes, while it's still kinda janky (hilariously so), it does show promise. And their core model, V5.6, ranked #2 globally for both image-to-video (I2V) and text-to-video (T2V) on Artificial Analysis as of late February. Granted, I tend to side-eye arena rankings, but hey, that's still nothing to sneeze at.

My Take: The interesting question is: could an extra $300 million bring PixVerse up to the level of the big guns like Kling, Seedance, and Veo? We'll see. But more competition in the AI video space is always a good thing.

Prompt Share

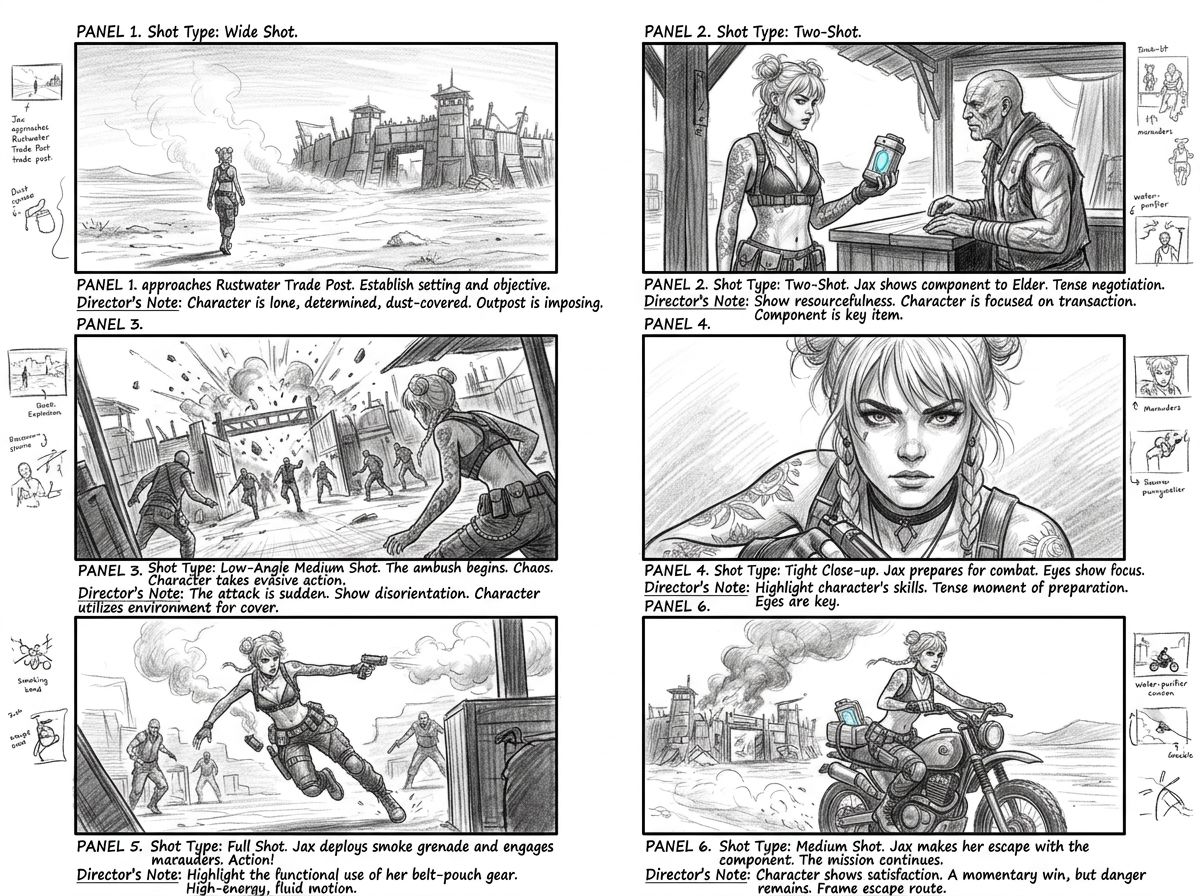

Instant Storyboards

A prompt share from Glenn Williams for a cool Nanobanana 2 storyboard output. The concept is simple: feed Nanobanana 2 a character reference image (or several references) along with a prompt asking for a six-panel storyboard, complete with camera angle labels, shot types, and director's notes in the margins.

[Image reference/object/person] as a [movie/TV show genre] movie storyboard, six sequential panels, pencil sketch with tonal shading, cinematic camera angles, shot type labels, director's notes in margins, 4K storyboard sheet.

Swap in your character reference, pick your genre, and let it rip. You'll want to experiment with the genre tag — it really does shift the tone and shot selection.

Now, I know many of you were initially unimpressed with Nanobanana 2 when it launched. But this is a good example of how much of the model's potential is still unexplored.

This method in particular might be interesting to use with some of the new Omni Video models. Give it a shot!

COOL THING OF THE WEEK

Claude Makes Videos?!

A little bit of a cheat here, since I actually talked about this in one of this week's videos — but it was buried in the back end of a ComfyUI video, so I'm not sure everyone caught it.

Did you know that Claude can make videos?

Now, I don't mean Kling- or Seedance-style cinematic outputs. These are more like… polygon-y, Video Toaster-style graphics. But they're surprisingly fun to play around with!

If you want to see it in action, check out the ComfyUI video linked below!

If you'd rather just jump in, head over to Claude and ask it:

Using Python and FFMPEG, make me a video about [whatever you want]
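For a sense of what's happening under the hood, here's a minimal hand-written sketch of the general approach. It is not Claude's actual output, which will vary with your prompt. It assumes ffmpeg is installed and on your PATH, and the animation, frame size, and file name are purely illustrative. Python draws each frame as raw RGB pixels, then pipes them into ffmpeg, which stitches them into an MP4.

```python
import subprocess

# Illustrative settings (assumptions, not anything Claude or ffmpeg requires)
WIDTH, HEIGHT, FPS, SECONDS = 320, 180, 24, 3
SQUARE = 40                    # side length of the moving square, in pixels
ORANGE = bytes((255, 140, 0))  # one RGB pixel


def make_frame(t: int) -> bytes:
    """Return frame number t as raw RGB24 bytes: an orange square sliding right over black."""
    frame = bytearray(WIDTH * HEIGHT * 3)  # zero-filled, so the background is black
    x0 = (t * 4) % (WIDTH - SQUARE)        # move 4 px per frame, wrapping back to the left
    y0 = (HEIGHT - SQUARE) // 2            # vertically centered
    for y in range(y0, y0 + SQUARE):
        start = (y * WIDTH + x0) * 3
        frame[start:start + SQUARE * 3] = ORANGE * SQUARE
    return bytes(frame)


def ffmpeg_command(out_path: str) -> list[str]:
    """Build an ffmpeg command that reads raw RGB frames from stdin and writes an MP4."""
    return [
        "ffmpeg", "-y",
        "-f", "rawvideo", "-pix_fmt", "rgb24",
        "-s", f"{WIDTH}x{HEIGHT}", "-r", str(FPS),
        "-i", "-",              # read frames from stdin
        "-pix_fmt", "yuv420p",  # widely compatible output pixel format
        out_path,
    ]


def render(out_path: str = "out.mp4") -> None:
    """Pipe every frame into ffmpeg and wait for it to finish encoding."""
    proc = subprocess.Popen(ffmpeg_command(out_path), stdin=subprocess.PIPE)
    for t in range(FPS * SECONDS):
        proc.stdin.write(make_frame(t))
    proc.stdin.close()
    if proc.wait() != 0:
        raise RuntimeError("ffmpeg exited with an error")
```

Calling `render("out.mp4")` should give you a three-second clip of a square sliding across the screen. Swap `make_frame` for your own drawing logic and you've got the basic recipe Claude is riffing on.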

Speaking of Claude, as mentioned above: yes, I have been Claude-pilled pretty hard.

I haven't gone completely off the deep end running 38 agents or anything, but I have built a v.1 office/task assistant that is running… pretty well? I mean, I have a weekend project of tearing it down and rebuilding it. But I gotta say, even the janky v.1 managed to spot some open slots in my week, enough to carve out the time I needed to get back on track with the newsletter!

I've got some thoughts on making a "Claude is the best LLM for Creative Work" video, but I need to get some more reps in before I do.

That said, if you haven't tried Claude yet, I do suggest you give it a whirl. More and more, I'm seeing posts and articles saying "I just discovered this crazy thing Claude can do." That kind of general user excitement is something I haven't really felt around, say, OpenAI lately.

FROM THE STUDIO

What I Covered This Week

It was basically Open Source Week on the channel!

First up, LTX released LTX Desktop — a free, open-source, locally run video editor that can generate video right on the timeline. The video covers installing it, system requirements (which are high), plus a full walkthrough of the software.

Now, I do know that many of you had installation issues, and I'm happy to say that LTX has released version 1.2, so they are actively working on quashing bugs. Overall, if you're someone who enjoys tinkering with open-source software, give this one a download. If not? Maybe hold off until v.1.5 or 2…

If you want to check out the video: https://youtu.be/p6pBez477Ys

The other big video was about ComfyUI's new App mode, which condenses the node-based spaghetti into an "easy-to-use" menu. As I mentioned in the video, it's great to see ComfyUI rounding off some of those sharp edges and giving new users firmer footing as an entry point.

If you've ever been Comfy Curious but overwhelmed, I think this is a good time to check in: https://youtu.be/SAjKSRRJstQ

THAT’S A WRAP!

And we’re back on track!

If you have any feedback or anything you'd like to see, please let me know by email!

Or, just drop a comment on one of the videos! I honestly do see them all!