The Quick Hits!

Hey gang! Welcome back to Theoretically News! This issue is a bit of a quickie—I just returned from an event in NYC last night (I’ll talk about that next week), but due to travel and a late night, I didn’t have as much time for the newsletter.

BUT, I didn’t want to let a week slide, especially this early in!

With that out of the way, let’s dive in!

NEWS

Hollywood Launches “Anti-AI” Campaign.

Celebrities including Scarlett Johansson, Cate Blanchett, and Joseph Gordon-Levitt are among the faces of a new campaign targeting tech companies for training generative AI tools on copyrighted works without express permission.

The “Stealing Isn’t Innovation” campaign comes from the Human Artistry Campaign, which launched the initiative this week. While many headlines frame it as strongly anti-AI, when you actually dig into it, the campaign is really more about licensing. As the campaign itself puts it:

"A better way exists - through licensing deals and partnerships, some AI companies have taken the responsible, ethical route to obtaining the content and materials they wish to use. It is possible to have it all. We can have advanced, rapidly developing AI and ensure creators' rights are respected."

My Take: To be fair, a lot of the rhetoric and hot takes around this story hark back to the MPAA’s “You Wouldn’t Steal a Car” anti-piracy ads from the file-sharing era. But when you dig into the Human Artistry Campaign’s literature, it becomes clear this isn’t about hamstringing AI video.

Rather, it’s a shift in tactics from “Sue ‘em” to “Sign ‘em” that echoes moves the recording industry has made with platforms like Suno and Udio.

How this strategy plays out, and what it means for larger platforms like Sora or Google’s Flow, remain to be seen. But expect this to become a bigger story over the course of 2026.

At the end of the day, despite some moral grandstanding in the campaign’s language, I’m reminded of an old quote: “What’s the answer to 99 out of 100 questions? …Money.”

NEWS

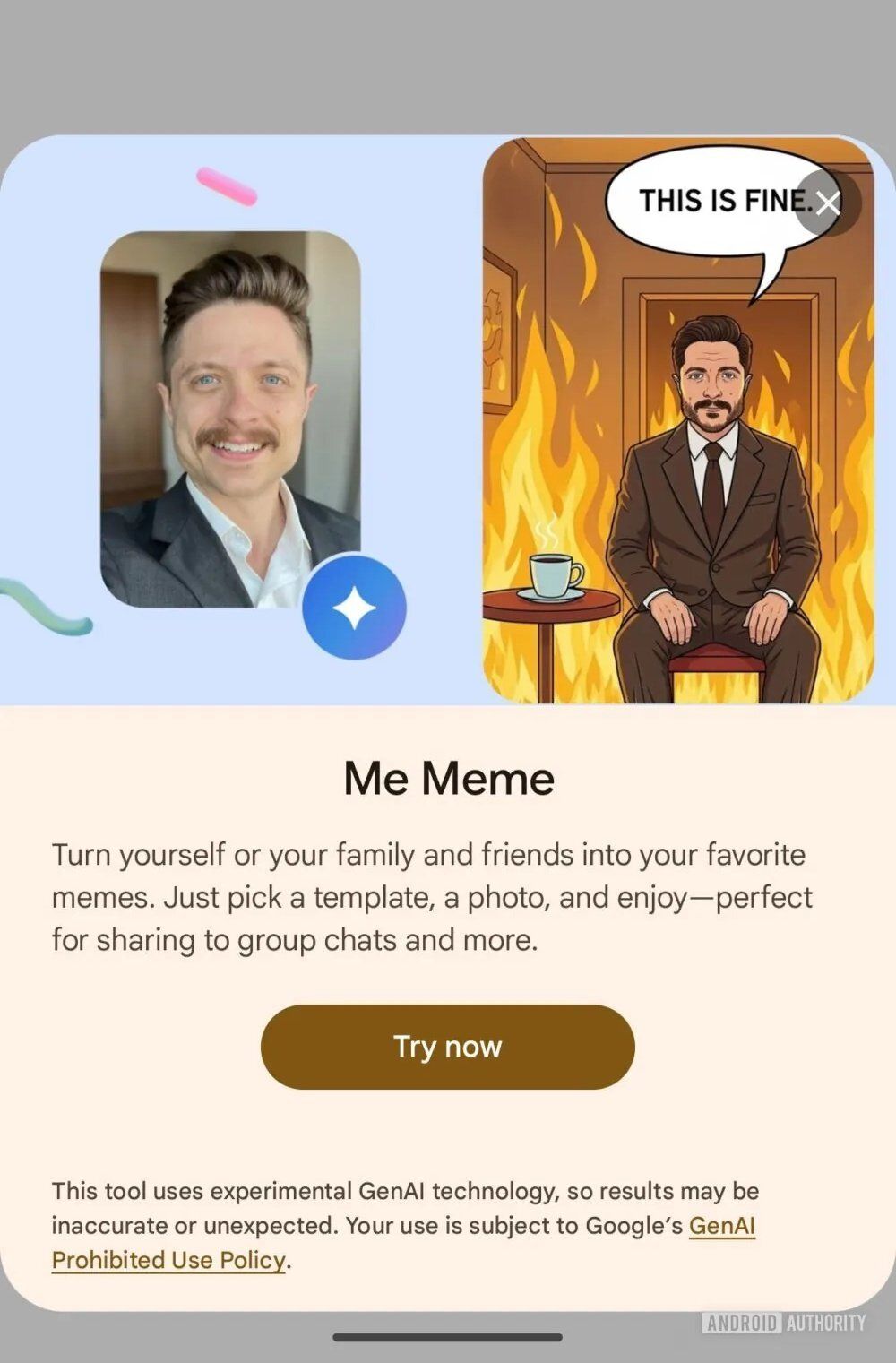

GOOGLE MEME THYSELF

It IS fine!

Speaking of Google (and admittedly, this is a bit of a silly one), they recently released a new Google Photos feature called “Me Meme,” which lets you combine a meme template with a photo of yourself to generate a personalized meme.

Unsurprisingly, the feature is powered by NanoBanana under the hood. Note that “Me Meme” hasn’t fully rolled out yet, so you may not see it in your Google Photos app even after updating.

As unserious a product launch as this is, what jumped out at me is that the feature is clearly aimed at the “normie” market. Since it’s template-based, it isn’t designed for power users, but it still puts the power of NanoBanana to work.

While I don’t think this particular feature will have a viral, mainstream moment like OpenAI’s Sora Cameo launch, it’s products and features like Me Meme that help introduce the technology to a wider audience.

But also, get ready to be tagged in “Me Meme” Facebook posts from that one great-aunt you never see, who still thinks everyone is on Facebook.

NEW MODEL

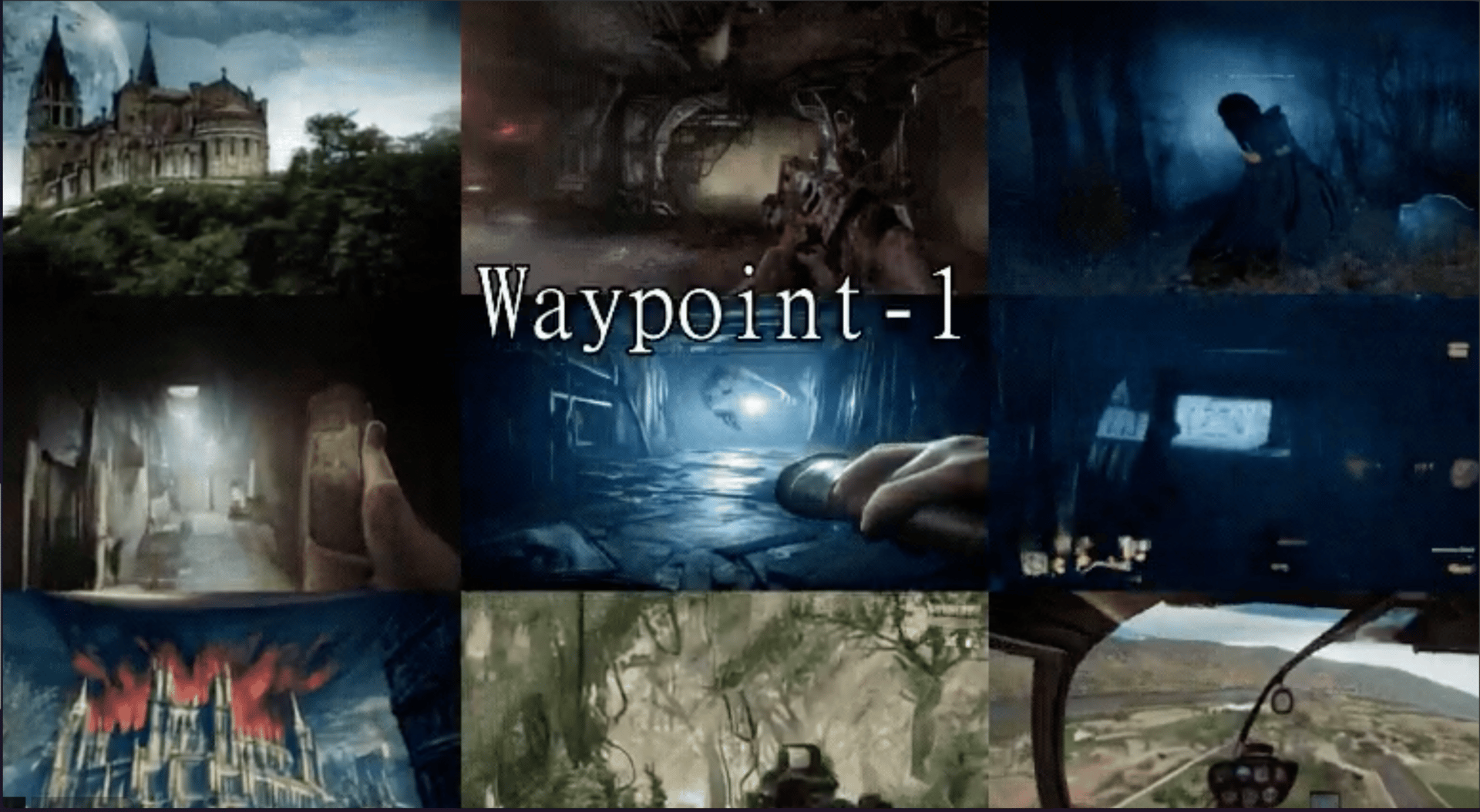

A World Model That Runs Locally?

Overworld has released a research preview of Waypoint-1, a real-time, local-first world model built for interactive, playable AI worlds.

It runs at 60fps on consumer-grade hardware and maintains a world in memory, updating it continuously as you move and act, rather than generating isolated frames or clips. The model is also designed to be mod-friendly and customizable.

Now, a note on that 60fps figure: it’s generating at 360p. And when they say “consumer-grade,” they mean an RTX 5090, which is technically consumer hardware, albeit the very top of the line.

That’s not to take anything away from Waypoint-1. Look, it’s a world model running locally. That’s a great achievement.

I haven’t had time to check it out yet, but I’ll be looking to cover it next week, so stay tuned! In the meantime, if you want a head start (and you have an RTX 5090), you can download the model from Hugging Face!

PRO TIP

The Black Frame

This week’s PRO TIP comes from plasm0, who suggests that when using a video generator with a First Frame/Last Frame feature, you use a solid black frame as your First Frame and your target image as the Last Frame.

I’d consider this an experimental technique, but it’s one that might just strike gold. And if you do land a good generation, you can then use your original image as the First Frame of a separate generation to chain the two clips together.

COOL THING OF THE WEEK

No Center

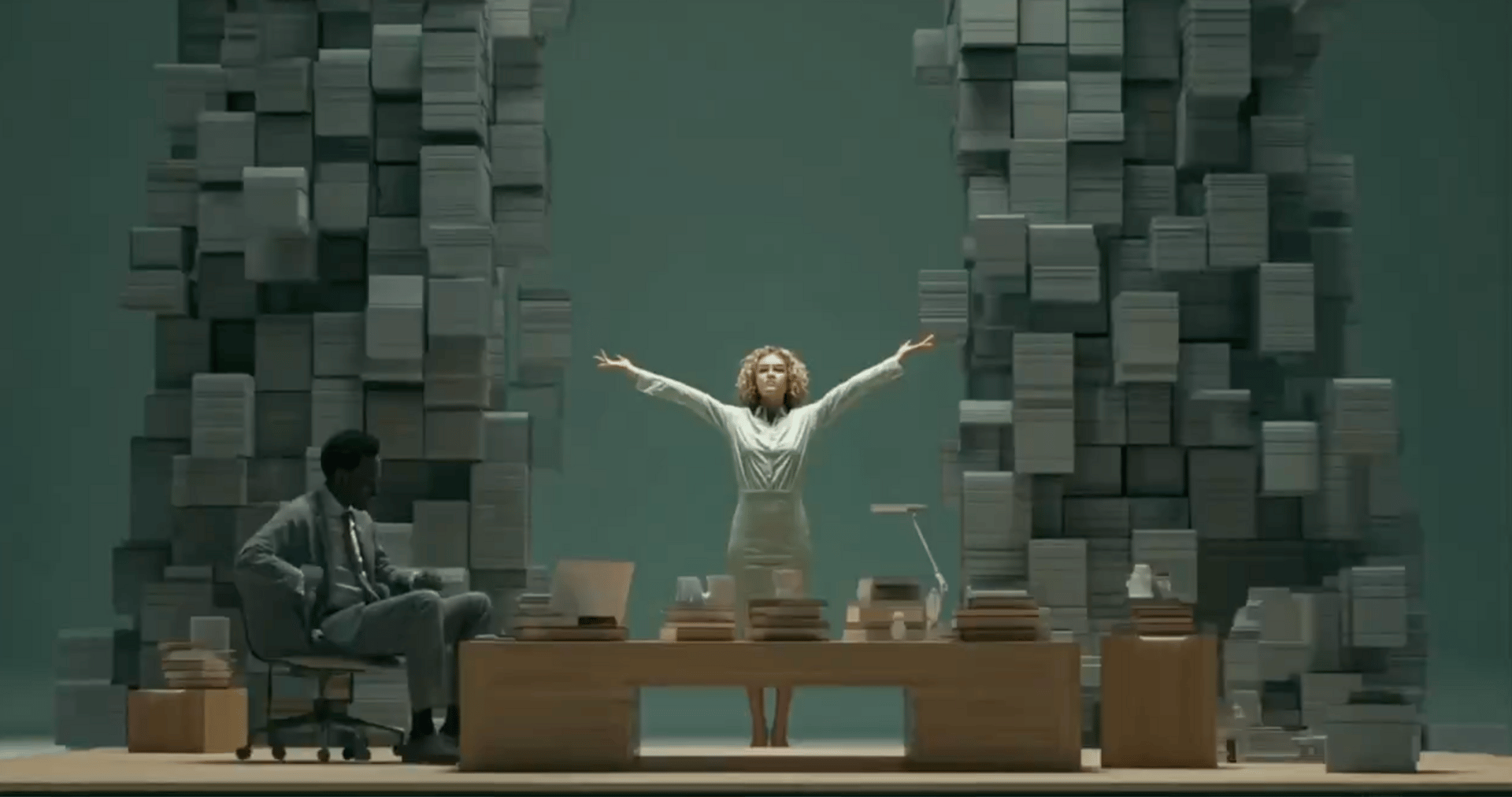

Cool Thing of the Week is an AI-generated music video from @A_MiYAGi_AI called No Center.

To be fair, I’m a little mid on the song itself (although it has grown on me with repeat viewings), but the visuals are captivating.

The video was made with Kling, and to my eye, the source images come from Midjourney. It isn’t stated explicitly, but I suspect Kling 2.5 was used here, since 2.6 doesn’t have a First Frame/Last Frame feature yet.

And while there are a few moments of decoherence (or “wonk”), for me, the overall aesthetic and the First Frame/Last Frame technique make for a mesmerizing end result.

I think it also goes to show that you don’t always have to use the latest/greatest model to create something unique and interesting!

FROM THE STUDIO

What I Covered This Week

Runway’s Gen-4.5 Image to Video launched this week, and I was honored to be asked to partner with them on a launch video showcasing its capabilities.

It was really cool to finally make a video together with the Runway team after all these years. A side note, since this is the newsletter and I can be a little “looser” here: I did get my fair share of comments accusing me of bias, but I want to point out that this was NOT a review video.

Beyond showcasing examples I generated myself, I think the real value of the video is that it gave me a chance to work with Runway’s Creative Directors and pick their brains for tips and tricks I could share with you all.

If you missed the video, you can check it out here: https://youtu.be/D9iTe6tbNXU

The second video of the week is one I've been meaning to make for a while. Lately, I've been seeing comments like, "This looks great, but I have no idea what you're talking about..."

It's actually pretty understandable. Those of us who have been grinding in this space for a while basically speak a different language, full of our own jargon and shorthand.

So I figured a "Starter Pack" for newcomers was long overdue. It was surprisingly challenging to level the playing field without overloading someone who's just getting started.

Anyhow, chances are this video is NOT aimed at you, but I'd appreciate it if you gave it a watch anyway, if only to help out the newcomers in the comments or leave some other tips and options for them to explore.

You might not agree with all my picks, and that's fine: leave your own for the tenderfoots to find!

The point of this video was to avoid overloading the freshman class, so I'm hoping the comments section becomes an additional resource for them!

This video is probably going to struggle to find its footing in the YouTube Algorithm (all hail its mighty glory… for the Algo is always watching!), so if you could take a moment to give it a view and a like, it would be much appreciated!

LINK: https://youtu.be/FJxn4-X0uAM

THAT’S A WRAP!

So there we go! Another one out the door!

If you have any feedback or anything you’d like to see, please let me know: [email protected]

Or, just drop a comment on one of the videos! I honestly do see them all!

As always, thank you for reading…

Tim